Toni Erskine is Professor of International Politics in the Coral Bell School of Asia Pacific Affairs at the Australian National University (ANU).

This 2.5-year research project (2023 – 2025) is generously funded by an Australian Department of Defence Strategic Policy Grant.

The use of artificial intelligence (AI), machine learning, and automated systems has already changed the nature of the battlefield. The further diffusion of AI-enabled systems into states’ resort-to-force decision making is unavoidable for Australia, its allies, and its adversaries. In the United States, for example, machine learning techniques are already used in some intelligence analyses, which, in turn, contribute to decisions of whether and when to use force. While this contribution is currently limited and indirect, trends in other realms suggest that the use of AI-driven systems will increase in this high-stakes area. Separately, there is potential for AI-enabled automated systems to initiate escalatory defensive action in contexts such as the cyber realm. If we begin to consider the possible future effects of using these technologies in resort-to-force decision-making processes now, we can develop policy to guide their development and use, promote necessary education and training, and, ultimately, mitigate risks.

This research project will have an important international collaborative dimension, which will include:

• two International Workshops on Anticipating the Future of War: AI, Automated Systems and Use-of-Force Decision Making, to be co-convened by Professor Steven E. Miller (Belfer Center, Harvard University) and Professor Toni Erskine (Coral Bell School of Asia Pacific Affairs, ANU) and held at the Australian National University (ANU) in Canberra, Australia in June 2023 and July 2024;

and

• a Policy Roundtable, also to be held at the ANU in Canberra in June/July 2024.

An international and interdisciplinary group of leading scholars – with backgrounds in political science, international relations (IR), mathematics, psychology, sociology, computer science, and philosophy – and military and AI/machine learning practitioners will participate in these activities and explore the risks and opportunities of introducing AI, machine learning, and automated systems into state-level use-of-force decision making. (Please see 'Project Participants.')

In addition to producing a series of published outputs, the project will be supplemented by an ‘AI, Automated Systems, and the Future of War’ Public Lecture Series and a 'Discussing AI, Automated Systems, and the Future of War' Seminar Series. (For details of these two series, please see 'Forthcoming Events' and 'Past News & Events'. The papers from the June 2023 workshop will be published in the Australian Journal of International Affairs in early 2024.)

This research project will analyse emerging and disruptive technologies in the form of AI-enabled and automated systems used both to inform state-level decision making on the resort-to-force and, in some contexts – such as defence against cyberattacks – to make and directly implement decisions on the resort-to-force. In the former case, human decision makers draw on algorithmic recommendations and predictions to reach resort-to-force decisions; in the latter case, decisions are reached with or without human oversight. Both entail future-focused, but foreseeable, developments, which challenge existing rules and norms surrounding states' decisions to engage in organised violence and warrant immediate consideration.

Machine learning techniques enhance our decision-making capacities by analysing huge quantities of data quickly, predicting outcomes, calculating opportunities and risks, and uncovering patterns of correlation in datasets that are beyond human cognition. The potential benefits of using AI-enabled systems are clear in scenarios where predictive analyses of key strategic variables – such as anticipated threat, risk of inaction, proportionality of a potential response, and mission cost – are fundamental.

Yet there are also complications that would accompany reliance on these systems. This project will focus on the following:

When programmed to calculate – or automatically implement – a response to a particular set of circumstances, intelligent machines will behave differently than human agents.

This difference complicates understandings of deterrence. Current perceptions of a state’s willingness to use force in response to aggression are based on assumptions of human judgement (and forbearance) rather than automated calculations. The use of automated systems – which would make and implement decisions at speeds impossible for human actors – could result in unintended escalations in the use of force.

Empirical studies show that individuals and teams relying on AI-driven systems often experience ‘automation bias’ – the tendency to accept without question computer-generated outputs. This tendency can make human decision-makers less likely to use their own expertise and judgment to test the machine-generated recommendations.

Unintended consequences include acceptance of error, the de-skilling of human actors, and decreased compliance with international rules and norms of restraint in the use of force.

Machine learning processes are frequently opaque and unpredictable. Those who are guided by them often do not understand how predictions and recommendations are reached, and do not grasp their limitations. The current lack of transparency in much AI-driven decision making – ‘algorithmic opacity’ – has led to negative consequences across a range of contexts.

As governments’ democratic – and international – legitimacy requires compelling and accessible justifications for decisions to use force, algorithmic opacity poses grave concerns.

Studies in both International Relations (IR) and organisational theory reveal the existing complexities and pathologies of organisational decision making. AI-driven decision-support and automated systems intervening in these complex structures risk exacerbating these problems.

Without carefully developed guidelines, AI-enabled systems at the national level could distort and disrupt strategic and operational decision-making processes and chains of command.

These complications – and their potential implications for Australia’s defence policy – warrant serious attention. This project will bring together new voices and diverse perspectives – in the form of an international group of practitioners and multidisciplinary, world-leading scholars – to contribute to a comprehensive study of the risks and opportunities of introducing AI-enabled systems to state-level decisions to engage in war across these four thematic areas.

This project will initiate a much-needed, research-led discussion on the effects of AI-enabled systems in state-level resort-to-force decision making. It also seeks to significantly extend the public Australian strategic policy debate on the impacts of disruptive and emerging technologies.

August 2025 – Project CI Toni Erskine (ANU) and Steven E. Miller (Harvard) are guest editing a ‘Themed Issue’ of the exciting new Cambridge University Press journal, Cambridge Forum on AI: Law & Governance. The issue is entitled ‘AI and the Decision to Go to War’ and will highlight some of the original research first presented at the project’s July 2024 workshop at the Australian National University (ANU).

10 July 2025 – Four project participants presented papers from the project at the Oceanic Conference on International Studies (OCIS) in Sydney on a panel entitled ‘Computer Says, “War”: The Risks of AI in Resort-to-Force Decision Making’, followed by a great discussion with the audience.

Panel participants: Project CI Professor Toni Erskine (ANU); Dr Bianca Baggiarini (Deakin); Dr Sarah Logan (ANU); and Dr Neil Renic (UNSW Canberra). Dr Ben Zala (Monash University) chaired the panel.

20 June 2025 – Four project participants presented the results of their recent research at the British International Studies Association (BISA) 2025 50th Anniversary Conference in Belfast on a panel on ‘AI and the Decision to Wage War: Identifying and Mitigating Risks’. We shared insights drawn from the developed versions of research papers first presented at the July 2024 project workshop at The Australian National University (ANU).

Panel participants: Project CI Professor Toni Erskine (ANU); Dr Paul Lushenko (US Army War College), who was ably represented by Professor Anthony King (University of Exeter); Dr Mitja Sienknecht (European University Viadrina); and Dr Bianca Baggiarini (Deakin). Professor Nicholas Wheeler (University of Birmingham) chaired the panel.

5 June 2025 – Project participant Dr Mitja Sienknecht presented our Policy Recommendations to the German Foreign Ministry at a workshop on 'Integrating Perspectives on the Responsible Use of AI in the Military Domain'.

2 March 2025 – Six project participants presented the results of their recent research at the International Studies Association (ISA) 66th Annual Convention in Chicago, USA, on a panel on ‘AI and the Initiation of War: Identifying and Mitigating Risks’. They shared insights drawn from the revised versions of their original research papers, first presented at the July 2024 project workshop at The Australian National University (ANU). The session was held at the Grand Ballroom of the Chicago Hilton Hotel (pictured below).

Panel participants: Project CI and Panel Chair Professor Toni Erskine (ANU); Professor Steven E. Miller (Harvard); Dr Mitja Sienknecht (European University Viadrina); Dr Paul Lushenko (US Army War College); and Professor Denise Garcia (Northeastern University).

19 February 2025 – Major General Mick Ryan delivered an outstanding public lecture on 'Supercharging Adaptation: AI & War in the 21st Century' at the Coral Bell School of Asia Pacific Affairs, followed by a lively discussion with the audience. The lecture, part of the project’s AI, Automated Systems, and the Future of War Public Lecture Series, was delivered to a full lecture theatre and chaired by Project CI Professor Toni Erskine.

Click here for a lecture flyer and abstract. A video recording of the lecture is available here.

11 February 2025 – Project CI Professor Toni Erskine hosted a delegation of Global Voices AU Policy Fellows and provided a briefing on the Anticipating the Future of War project in preparation for the Fellows’ participation in the AI For Good Global Summit in Geneva, Switzerland, in July 2025. Global Voices is a youth-led not-for-profit that upskills young (18–35) Australians in policymaking.

December 2024 – Congratulations to ANU Honours student Monique Fagioli who received a High Distinction for her dissertation on AI and resort-to-force decision making. Monique was supervised by Project CI Professor Toni Erskine and participated in the project’s July 2024 ‘Anticipating the Future of War’ workshop at ANU.

6 December 2024 – Project CI Professor Toni Erskine was interviewed by Antony Funnell on the ABC Radio National program Future Tense about her research on AI as part of the Anticipating the Future of War project. You can listen to the interview here from c. 08:55.

15 October 2024 - The sixth seminar in our Discussing AI, Automated Systems, and the Future of War Seminar Series was delivered by Dr Sarah Logan, Senior Lecturer in the Department of International Relations, Coral Bell School of Asia Pacific Affairs, at The Australian National University (ANU).

Dr Logan gave a superb presentation (to a very full room) based on some of her recent research as part of this project. Her presentation was entitled 'Tell Me What You Don't Know: Large Language Models and the Pathologies of Intelligence Analysis' and was followed by a great discussion with the audience.

Click here for the lecture flyer and full abstract. The audio recording of the seminar is available here.

9-10 September 2024 - Project CI Professor Toni Erskine spoke at the second Global Summit on Responsible AI in the Military Domain (REAIM) in Seoul, South Korea. She contributed to the high-level panel, “Responsible Military Use of AI: Bridging the Gap between Principles and Practice,” organised by the Carnegie Council on Ethics in International Affairs in collaboration with Oxford University's Institute for Ethics, Law and Armed Conflict, alongside Dr Brianna Rosen (University of Oxford), Dr Tess Bridgeman (New York University School of Law), Dr Paul Lyons (Senior Director for Defense, Special Competitive Studies Project), and Professor Kenneth Payne (King’s College London). The panel analysed ongoing efforts to operationalize and implement principles for the responsible use of military AI.

The Summit was hosted by the government of South Korea and co-sponsored with the governments of the Netherlands, Singapore, the United Kingdom, and Kenya. It brought together policymakers, industry representatives, and distinguished experts from around the world to discuss key opportunities, challenges, and risks associated with military applications of AI.

Click here for an op-ed on the panel, written by Dr Brianna Rosen.

26 July 2024 – We were grateful to have Professor Elke Schwarz from Queen Mary University of London present the fifth seminar in our ‘Discussing AI, Automated Systems, and the Future of War’ seminar series in the Mills Room, Chancelry Building. Professor Schwarz gave a compelling and provocative presentation entitled ‘Seated on the Whirlwind: Artificial Intelligence, Weapons Systems, and Moral Agency’ to a very engaged audience, followed by a brilliant discussion.

Click here for the lecture flyer and full abstract. The video recording of the seminar is available here.

25 July 2024 - Our first Policy Roundtable, co-convened by Project CI Professor Toni Erskine (ANU) and Professor Steven E. Miller (Harvard), was held in the Chancelry Building on the campus of the Australian National University. Each paper presenter from the preceding workshop participated in a roundtable session and outlined the policy recommendations drawn from their research in a concise five-minute presentation. Senior government delegates from the Departments of Defence, Foreign Affairs and Trade, Home Affairs, and Industry, Science, and Technology acted as 'Roundtable Commentators' for each session and valuably responded to the recommendations, raising questions and challenges, before the floor was opened to wider discussions.

Click here for the program, which includes the Policy Roundtable session descriptions and bios of the senior government delegates who participated as Roundtable Commentators.

23-24 July 2024 - Our second Anticipating the Future of War: AI, Automated Systems, and Resort-to-Force Decision Making Workshop was co-convened by Project CI Professor Toni Erskine (ANU) and Professor Steven E. Miller (Harvard) at the Australian National University in Canberra, Australia. Fifteen original research papers on the risks and opportunities of AI-enabled systems infiltrating resort-to-force decision making were presented and discussed.

Click here to see the full workshop program, including paper titles and speakers' bios.

(Overseas workshop participants also had the opportunity to experience some local wildlife and continue these discussions outdoors following the workshop.)

22 July 2024 - Our AI, Automated Systems, and The Future of War Public Lecture Series continued with an outstanding lecture delivered by Professor Ashley Deeks, Class of 1948 Scholarly Research Professor at the University of Virginia Law School. Professor Deeks spoke to a large and engaged audience at the Lotus Theatre in the China in the World Building on 'The Double Black Box: National Security, Artificial Intelligence, and the Struggle for Accountability'. Her important lecture was introduced by Professor Helen Sullivan, Dean of the College of Asia and the Pacific, and followed by a lively Q & A session with the audience, chaired by project CI Professor Toni Erskine.

Click here for the lecture flyer and full abstract. A video of the full lecture is available here.

13 June 2024 – The ‘Anticipating the Future of War: AI, Automated Systems, and Resort-to-Force Decision Making’ project was highlighted in the online and print versions of the ANU Reporter in an article by Hannah Dixon. The article is available here, published in Volume 55, Number 1, Autumn 2024. The article includes reflections by project participants Bianca Baggiarini and Ben Zala and project CI Toni Erskine.

(The article also includes a link to the recording of the 2023 John Gee Memorial Lecture, which was delivered by Toni Erskine and entitled ‘Before Algorithmic Armageddon: The Immediate Risks of AI in War’.)

7 June 2024 – The British International Studies Association (BISA) annual conference was held in Birmingham, UK and included a Panel on ‘Anticipating the Future of War: AI, Automation, and Resort-to-Force Decision Making’. This session included participants from the 2023 workshop held in Canberra, who shared the insights from their workshop papers (which have been revised for publication in the April 2024 issue of the Australian Journal of International Affairs).

Panel participants: Professor Cian O’Driscoll (ANU) – Chair; Dr Lube Zatsepina - Discussant (Liverpool John Moores University); Professor Toni Erskine – Convenor and Participant (ANU), ‘Before Algorithmic Armageddon: Anticipating Immediate Risks to Restraint When AI Infiltrates Decisions to Wage War’; Dr Bianca Baggiarini (ANU), ‘Through a Glass, Darkly: Algorithmic War and the Dangers of (In)-Visibility, Anonymity, and Fragmentation’; and Professor Nicholas J. Wheeler (University of Birmingham), ‘The Role of Artificial Intelligence in Nuclear Crisis Decision-Making: A Complement, Not a Substitute’.

6 June 2024 - We were thrilled to be joined by Lieutenant Colonel Paul Lushenko, PhD, Assistant Professor and Director of Special Operations at the US Army War College, who presented the fourth SEMINAR in the 'Discussing AI, Automated Systems, and the Future of War' seminar series. His fascinating presentation on ‘Battlefield Trust for Human-Machine Teaming: Evidence from the US Military’ was followed by a very engaged and insightful discussion. Click here for the seminar flyer, speaker bio., and full abstract. The recording of the seminar is available here.

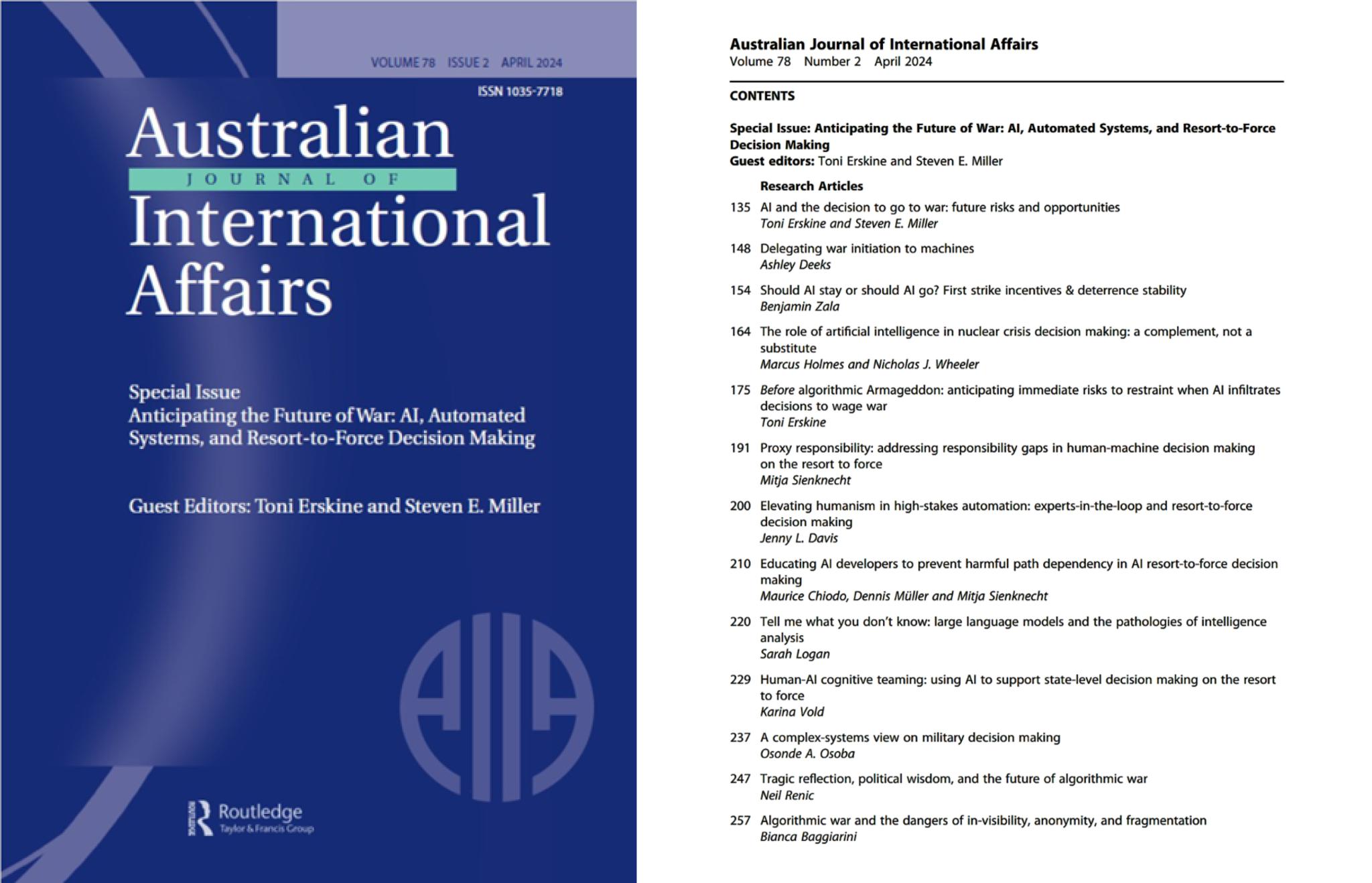

1 June 2024 – Thirteen articles, based on the project’s 2023 workshop papers, were published in a Special Issue of the Australian Journal of International Affairs (AJIA) entitled ‘Anticipating the Future of War: AI, Automated Systems, and Resort-to-Force Decision Making’, guest edited by Professors Toni Erskine and Steven E. Miller (Harvard).

The Introduction to the Special Issue is open access and available here.

3 April 2024 – Seven project participants presented work at the International Studies Association (ISA) annual convention in San Francisco, California on a Roundtable on ‘Anticipating the Future of War: AI, Automated Systems, and Resort-to-Force Decision Making’. The presenters shared insights from the 2023 workshop held in Canberra and from the original research papers written for the workshop, which have been extended, developed, and discussed over the intervening months.

The roundtable was a great success. Presenters spoke to a full room and a very engaged audience. Many people from the audience stayed on after the session finished to continue talking to members of the panel.

Roundtable participants: Professor Toni Erskine - Chair (ANU); Dr Justin Canfil - Discussant (Carnegie Mellon); Dr Mitja Sienknecht (European New School of Digital Studies); Professor Marcus Holmes (William & Mary); Dr Neil Renic (Copenhagen); Professor Denise Garcia (Northeastern); Dr Sarah Logan (ANU).

The panel members were also joined by co-convenor of the workshops, Professor Steven E. Miller (Harvard), for a post-panel dinner. Project CI Toni Erskine and Steve Miller met over the course of the conference to make plans for the upcoming July 2024 project workshop, to be held at the Australian National University in Canberra, and to finalize the upcoming Special Issue of the Australian Journal of International Affairs that they are co-editing.

December 2023-January 2024 – We were pleased to be joined by Fionn Parker, a fifth-year PPE-Law student, who interned with the project over the 2023/2024 summer. Fionn is a recipient of the National University Scholarship, editor of the educational book ‘Catch Up with Top Achievers,’ and has been published in Australian Outlook and the ANU Undergraduate Research Journal. His research interests lie at the intersection between legal theory, international law, and constitutional law, focussing on themes and models of legitimacy and their evolution in the 21st century. While interning with the project, Fionn provided research and editorial assistance for the project’s Special Issue with the Australian Journal of International Affairs and conducted his own original research project on ‘Autonomous Defence Systems, State Practice, and Opinio Juris: A Worrying Potentiality for International Law’ under Professor Toni Erskine’s supervision.

4 December 2023 - The third SEMINAR in the ‘Discussing AI, Automated Systems, and the Future of War Seminar Series’ was given by Dr Benjamin Zala (ANU) on ‘Should AI Stay or Should AI Go? First Strike Incentives & Deterrence Stability in the Third Nuclear Age’. This thought-provoking presentation was followed by a lively discussion with the audience and was a great way to conclude our activities for 2023.

Click here for the lecture flyer and full abstract.

28 November 2023 - Project CI Professor Toni Erskine was invited to give the 2023 John Gee Memorial Lecture.

Professor Erskine delivered the 15th Annual John Gee Memorial Lecture on ‘Before Algorithmic Armageddon: The Immediate Risks of AI in War’ to a full lecture theatre. She followed an esteemed group of previous John Gee Memorial Lecturers, including the Hon. Malcolm Fraser, Professor Ramesh Thakur, and Professor Gareth Evans.

In her lecture, Professor Erskine maintained that we are overlooking grave risks that accompany our increasing reliance on artificial intelligence in war. She argued that ‘a neglected danger that AI-enabled weapons and decision-support systems pose is that they change how we (as citizens, soldiers, and states) deliberate, how we act, and how we view ourselves as responsible agents’. With reference to our interaction with particular AI-enabled systems in war, Professor Erskine demonstrated how this could, in turn, have ‘profound ethical, political, and even geo-political implications – well before AI evolves to a point where some fear that it could initiate algorithmic Armageddon’.

Click here for the lecture flyer and abstract. A video of the full lecture is available here.

27 September 2023 - We were fortunate to have Dr Miah Hammond-Errey, Director of the Emerging Technology Program at the United States Studies Centre, University of Sydney, present the second SEMINAR in the 'Discussing AI, Automated Systems, and the Future of War' seminar series. She spoke about her fascinating research on 'The Impact of Big Data and Emerging Technologies on National Security, Intelligence Production, and Decision Making' to a very full Mills Room in the Chancelry Building, followed by a great discussion. Click here for the seminar flyer, speaker bio., and full abstract.

29 - 30 June 2023 - A two-day WORKSHOP, 'Anticipating the Future of War: AI, Automated Systems, and Resort-to-Force Decision Making', was co-convened by Professor Toni Erskine (ANU) and Professor Steven E. Miller (Harvard) at the Australian National University in Canberra. Eighteen leading scholars with backgrounds in computer science, AI and machine learning, mathematics, sociology, philosophy, political science, psychology, and international relations presented and discussed sixteen original papers. Each paper explored one of the four 'complications' (outlined in the project description above) of AI-enabled automated systems and decision-support systems being used in the context of state-level resort-to-force decision making. Click here to view the workshop program.

28 June 2023 - In an outstanding inaugural LECTURE for the new Public Lecture Series, 'AI, Automated Systems, and the Future of War', Professor Sarah Kreps, John L. Wetherill Professor of Government and Director of the Tech Policy Institute at Cornell University, spoke to a full lecture theatre on ‘Weaponizing ChatGPT? National Security and the Perils of AI-Generated Texts in Democratic Societies’. In this talk, Professor Kreps presented a range of original experimental evidence and offered guidance on how to harness the prospects, and guard against the perils, of generative AI. Click here for the lecture flyer and full abstract. A video of the full lecture is available here.

27 June 2023 - In the first SEMINAR of the new Seminar Series, 'Discussing AI, Automated Systems, and the Future of War', Retired Major General Mick Ryan gave an illuminating and insightful talk on ‘Thinking About Future War: Drones, Mass, and Other Trends’. He explored key military trends that have underpinned the conduct of the Ukraine and Russia war, and how the explosive change in key trends such as human-machine teaming, strategic influence, and mass warfare will drive further changes in the character of 21st century competition and conflict. Click here for the seminar flyer and full abstract.

6 March 2023 - The KEYNOTE ADDRESS at the Chancellor’s International Women’s Day Lunch, University of South Australia, was delivered by Project CI Professor Toni Erskine on ‘AI and the Risk of Misplaced Responsibility in War’. She addressed the risks that accompany our propensity to attribute to intelligent artefacts – including AI-enabled weapons and decision-support systems – capacities that they do not have and assume that they are able to bear moral responsibilities of restraint in war.

Special issue of the Australian Journal of International Affairs (AJIA) — Anticipating the Future of War: AI, Automated Systems, and Resort-to-Force Decision Making, guest edited by Toni Erskine and Steven E. Miller. Vol. 78, No. 2 (2024).

She is Chief Investigator of the ‘Anticipating the Future of War: AI, Automated Systems, and Resort-to-Force Decision Making’ Research Project, funded by the Australian Government through a grant by Defence. She also serves as Academic Lead for the United Nations Economic and Social Commission for Asia and the Pacific (UN ESCAP)/Association of Pacific Rim Universities (APRU) ‘AI for the Social Good’ Research Project and in this capacity works closely with government departments in Thailand and Bangladesh. She has recently served as Director of the Coral Bell School at the ANU (2018-23) and Editor of International Theory: A Journal of International Politics, Law & Philosophy (2019-23). Her research interests include the impact of new technologies (particularly AI) on organised violence; the moral agency and responsibility of formal organisations in world politics; the ethics of war; the responsibility to protect vulnerable populations from mass atrocity crimes; and the role of joint purposive action and informal coalitions in response to global crises. She is the recipient of the International Studies Association’s 2024-25 Distinguished Scholar Award in International Ethics.

Previously, he was Senior Research Fellow at the Stockholm International Peace Research Institute (SIPRI) and taught Defense and Arms Control Studies in the Department of Political Science at the Massachusetts Institute of Technology. He is editor or co-editor of more than two dozen books, including, most recently, The Next Great War? The Roots of World War I and the Risk of U.S.-China Conflict. Professor Miller is a Fellow of the American Academy of Arts and Sciences, where he is a member of their Committee on International Security Studies (CISS). He currently leads the Academy’s project on Promoting Dialogue on Arms Control and Disarmament. He is also co-chair of the U.S. Pugwash Committee and a member of the Council of International Pugwash.

Bianca’s current research is on the sociopolitical and ethical impacts of autonomy and AI-enabled technologies in military and security contexts. She is examining the role of trust discourse in shaping debates about ethical military AI (arguing that machine learning algorithms naturally agitate rules- and standards-based orders, thereby challenging the possibility of trust), the changing status of soldiers’ labour in response to increasing autonomy, and the social meaning of technology demonstrations as it relates to communicating the ethical and legal potential of AI-enabled systems. Her forthcoming monograph, Governing Military Sacrifice, is one of the first books to connect the rise of drones and combat unmanning with military and security privatization and includes original interview data from both drone advocates and critics alike. Bianca holds a PhD (2018) from York University in Toronto, an MA in sociology from Simon Fraser University, and a BA in political science from Simon Fraser University. From 2019 to 2021, she was a Researcher at UNSW at the Australian Defence Force Academy.

From 2024 to 2025, he will take leave to complete a Stanton Nuclear Security Fellowship at the Council on Foreign Relations. Dr. Canfil's research interests concern the impact of emerging technologies on international law and arms control, both past and present. His research has appeared in outlets such as the Journal of Cybersecurity and the Oxford Handbook on AI Governance. He received a Fulbright Scholarship to conduct doctoral research in China and a PhD in Political Science from Columbia University. He can be reached at [www.jcanfil.com](http://www.jcanfil.com/) or on Twitter @jcanfil.

His research looks at the ethical issues arising in all types of mathematical work, including AI, blockchain, finance, modelling, surveillance, cryptography, and statistics. Since 2016 he has been running an annual seminar series on ethics in mathematics as part of the Cambridge University Ethics in Mathematics Society, and he sat on the ethics advisory group of Machine Intelligence Garage UK for 3 years. He has released the "Manifesto for the Responsible Development of Mathematical Works", and is currently writing a monograph, Ethics for the Working Mathematician, both of which are the first works of their kind. Maurice comes from a background in research mathematics, holding two PhDs in mathematics from the University of Cambridge and the University of Melbourne on problems in algebra and computability theory. He has over 20 years' experience studying, working, and teaching in mathematics departments around the world.

Her book, How Artifacts Afford: The Power and Politics of Everyday Things (MIT Press 2020), provides an operational framework specifying how technological design reflects and shapes individuals and societies. With particular attention to AI, big data, and algorithmic systems, Jenny maintains active collaborations within and outside of academia, applying a sociological lens to the ways data-driven infrastructures infuse our social worlds. She is currently working to establish algorithmic reparation as an alternative to algorithmic fairness, displacing neutrality with reparative justice.

She writes about the use of force, executive power, secret treaties, and the intersection of national security and AI, and she is the co-author of a leading casebook on foreign relations law. She is an elected member of the American Law Institute, a member of the State Department’s Advisory Committee on International Law, and a contributing editor to the Lawfare blog. She recently served as Special Assistant to the President, Associate White House Counsel, and Deputy Legal Advisor to the U.S. National Security Council. Before joining UVA, she served for ten years in the U.S. State Department’s Office of the Legal Adviser, including as the embassy legal adviser at the U.S. Embassy in Baghdad during Iraq’s constitutional negotiations. Professor Deeks received her J.D. with honors from the University of Chicago Law School, where she was elected to the Order of the Coif and served as an editor on the Law Review. After graduation, she clerked for Judge Edward R. Becker of the U.S. Court of Appeals for the Third Circuit.

He is also co-director of the Social Science Research Methods Center and director of the Political Psychology and International Relations lab, both at William & Mary. He is Principal Investigator on a US Department of State grant exploring the effect of people-to-people exchanges in US-Japan relations through the lens of baseball diplomacy over the last 150 years. His research explores various aspects of diplomacy, including the dynamics of interpersonal relationships in international politics. He is the author of Face-to-Face Diplomacy: Social Neuroscience in International Relations, an award-winning book with Cambridge University Press. Holmes is also co-editor, with Corneliu Bjola, of a seminal volume on the use of social media in achieving diplomatic ends, Digital Diplomacy: Theory and Practice, with Routledge. His latest book project, Personal Chemistry, is co-authored with Nicholas J. Wheeler and is under contract at Oxford University Press.

Her work lies at the intersection of technology, politics, and national security, and is the subject of five books and a range of publications published in academic journals such as the New England Journal of Medicine, Science Advances, Vaccine, Journal of the American Medical Association (JAMA) Network Open, American Political Science Review, and Journal of Cybersecurity, policy journals such as Foreign Affairs, and media outlets such as CNN, the BBC, New York Times, and Washington Post. She has a BA from Harvard University, MSc from Oxford, and PhD from Georgetown. From 1999 to 2003, she served as an active-duty officer in the United States Air Force.

Her research interests include the future of open source intelligence; the governance of international data transfers; the development of global privacy norms; and the geopolitics of global technology standards. Her work has been funded by the Annenberg School for Communication, the Australian government, and the United Nations Economic and Social Commission for Asia and the Pacific/Association of Pacific Rim Universities. Her first book, Hold Your Friends Close: Countering Radicalization in Britain and America, was published by Oxford University Press in 2022.

Recurring themes in his work include algorithmic equity, modelling for decision support, and modelling the behaviours of social agents. Dr Osoba is currently a senior AI engineer on Fairness at LinkedIn where he works on helping enable the platform’s use of AI/ML in a responsible and trustworthy manner. Prior to LinkedIn, Osoba was a senior information scientist at the RAND Corporation and a professor of public policy at the Pardee RAND Graduate School. His policy research portfolio at RAND focused on AI/ML applied to problems in social and economic well-being and national security. At the Pardee RAND Graduate School, Osoba was the Associate Director for Tech & Narrative Lab, helping to pioneer a novel program in training the next generation of effective and creative tech policy thought leaders. Dr Osoba earned his B.Sc. in Electrical and Computer Engineering from the University of Rochester and his Ph.D. in Electrical Engineering from USC.

Mick established the Australian Army Research Scheme in 2013, was inaugural President of the Defence Entrepreneurs Forum (Australia) and is a member of the Military Writers Guild. He is a keen author on the interface of military strategy, innovation, and advanced technologies, as well as how institutions can develop their intellectual edge. In February 2022, Mick retired from the Australian Army. In the same month, his book War Transformed was published by USNI Books. His latest book, White Sun War: The Campaign for Taiwan, was published on 4 May 2023 by Casemate Books. His next book, about strategy and adaptation in the Ukraine War, will be published on 13 August 2024. Mick runs his own strategic advisory company and is a strategic advisor to several Australian and U.S. companies. He has written regular columns for the Sydney Morning Herald, ABC Australia and Foreign Affairs and is a frequent commentator on ABC TV, the BBC and CNN. Mick is currently the inaugural Senior Fellow for Military Studies at the Lowy Institute in Sydney, and an adjunct fellow at the Center for Strategic and International Studies in Washington DC.

Renic’s current work evaluates the practical and moral challenges of emerging and evolving technologies such as drone and autonomous violence. He is the author of “Asymmetric Killing: Risk Avoidance, Just War, and the Warrior Ethos” (Oxford University Press 2020) and has published extensively in academic journals, including The European Journal of International Relations, Survival, Ethics and International Affairs, and the Bulletin of the Atomic Scientists.

Her paper on the debordering of intrastate conflicts, based on her PhD, received the best paper award in International Relations (IR) from the IR section of the German Political Science Association. Her research interests include the (digital) transformation of violence and conflicts; border and boundary studies; the responsibility of state and non-state actors in world politics; and inter- and intra-organizational decision-making in security contexts. Her work is situated at the intersection of IR, peace and conflict studies, and science and technology studies (STS). In her current research, Mitja analyzes the impact of digitalization on armed conflicts and collaborates in developing and training an AI to identify argumentative structures in IR theories.

Vold specialises in Philosophy of Cognitive Science and Philosophy of Artificial Intelligence, and her recent research has focused on human autonomy, cognitive enhancement, extended cognition, and the risks and ethics of AI.

He is a non-resident Senior Fellow at BASIC (the London-based NGO that works on international trust-building and nuclear diplomacy), where he is the academic lead on the BASIC-ICCS Nuclear Responsibilities Programme. His publications include (with Ken Booth) The Security Dilemma: Fear, Cooperation, and Trust in World Politics (Palgrave Macmillan, 2008); Saving Strangers: Humanitarian Intervention in International Society (Oxford: Oxford University Press, 2000); and Trusting Enemies: Interpersonal Relationships in International Conflict (Oxford University Press 2018). He is a Fellow of the Academy of Social Sciences in the United Kingdom, a Fellow of the Learned Society of Wales, and has had an entry in Who’s Who since 2011.

His work has appeared in over a dozen peer-reviewed journals, such as Review of International Studies, Journal of Global Security Studies, Third World Quarterly and the Bulletin of the Atomic Scientists. His book Power in International Society: A Perceptual Approach to Great Power Politics is under contract with Oxford University Press and his edited volume, National Perspectives on a Multipolar Order, was published by Manchester University Press in 2021. He has been a Stanton Nuclear Security Fellow in the Belfer Center for Science & International Affairs at Harvard University and has previously held positions in the UK at the Oxford Research Group, Chatham House, and the University of Leicester, where he is also currently an Honorary Fellow working with the European Research Council-funded Third Nuclear Age project (https://thethirdnuclearage.com/).

His work lies at the intersection of emerging technologies, politics, and national security, and he also researches the implications of great power competition for regional and global order-building. Paul is the author and editor of three books, Drones and Global Order: Implications of Remote Warfare for International Society (2022), The Legitimacy of Drone Warfare: Evaluating Public Perceptions (2024), and Afghanistan and International Relations (under contract with Routledge). Paul has written extensively on emerging technologies and war, publishing in academic journals, policy journals, and media outlets such as Security Studies, Foreign Affairs, and The Washington Post.

She is the author of Death Machines: The Ethics of Violent Technologies (Manchester University Press), an RSA Fellow, a member of the International Committee for Robot Arms Control (ICRAC), a 2022/23 Fellow at the Center for Apocalyptic and Post-Apocalyptic Studies (CAPAS) in Heidelberg, and a 2024 Leverhulme Research Fellow. Her work has been published in a number of philosophical and security-focused journals, including Ethics and International Affairs, Philosophy Today, Security Dialogue, Critical Studies on Terrorism, and the Journal of International Political Theory. She is co-series editor for the Springer Verlag series: Frontiers in International Relations and Associate Editor for the Journal New Perspective.

Her recent book is called Big Data, Emerging Technologies and Intelligence: National Security Disrupted. Dr Hammond-Errey spent eighteen years leading federal government analysis and communications activities in Australia, Europe, and Asia and was awarded an Operations Medal. She previously established the Emerging Technology Program at the US Studies Centre at the University of Sydney and ran the information operations team at the Australian Strategic Policy Institute. She is a member of the Australian Institute of Company Directors and is currently affiliated with both the University of Sydney, where she teaches postgraduate cyber security, and the Deakin University Cyber Research and Innovation Centre, where she publishes on AI and cyber security.

After postdoctoral work at both Yale and CSIRO, she joined ANU in 2012, where she held an ARC DECRA Fellowship in nuclear reactions before switching over to research and teaching related to safety-critical systems design. She was convenor of the Algorithmic Futures Policy Lab, which was supported by the Erasmus+ Programme of the European Union. She is also creator, co-host, and co-producer of the Algorithmic Futures Podcast.

Dr Assaad is a member of the expert advisory group for the global commission on responsible AI in the military domain. She was named one of the “Top 10 Women in AI” in the Asia-Pacific by AI Magazine. She received the 2023 Women in AI Award for Defence and the 2023 Women to Watch in Emerging Aviation Technologies Global Award, and was named one of the “100 Brilliant Women in AI Ethics” for 2023. She was also a Top 5 Science Resident with the ABC and is the co-host of the Algorithmic Futures podcast.

At present, she is working on a book manuscript that examines the history of the anti-nuclear posture of Scotland. Her second research area centres on the intersection of AI and critical security, specifically examining how AI advancements reflect states’ identity politics and impact their security practices. Luba holds a PhD in politics from the University of Edinburgh. Previously, she held a teaching position at the University of Edinburgh and a research position in proliferation and nuclear policy at the Royal United Services Institute for Defence and Security Studies (RUSI).

In 2022, he was on sabbatical at Cambridge University’s Centre for the Study of Existential Risk. His current research interests include strategic studies; futures literacy and existential risks; and Britain’s defence cooperation and military exercises with Japan as part of the Indo-Pacific “tilt”.

Dennis is also a professionally trained software developer and IT systems administrator. In 2022, Dennis held a Design and Technology Fellowship at Auschwitz for the Study of Professional Ethics (FASPE), where he studied the role that engineers and other technologists played during the Holocaust. Dennis is a founding member of the Ethics in Mathematics Project at Cambridge University and has previously served as the President of the Cambridge University Ethics in Mathematics Society. His current research focuses on ethics in mathematics and its education, and responsible use of AI and operations research.

She received the Distinguished Scholar award from the International Studies Association in 2018 and was a co-winner of the 2003 American Political Science Association Jervis and Schroeder Award for best book in International History and Politics for her book Argument and Change in World Politics: Ethics, Decolonization, Humanitarian Intervention (CUP, 2002). Professor Crawford’s most recent publication is The Pentagon, Climate Change, and War (MIT Press, 2022). She is also working on To Make Heaven Weep: Civilians and the American Way of War. She has authored several other books, including Accountability for Killing: Moral Responsibility for Collateral Damage in America’s Post‑9/11 Wars (2013). She is a co-founder and co-director of the ‘Costs of War Project’, based at Brown University.

Director of the Defence Intelligence Organisation, and Head of the National Assessments Staff in the National Intelligence Committee.

He is the author of 5 books and 4 reports to government, as well as more than 150 academic articles and monographs about the security of the Asia-Pacific region, the US alliance, and Australia’s defence policy. He wrote the 1986 Review of Australia’s Defence Capabilities (the Dibb Report) and was the primary author of the 1987 Defence White Paper.

She develops legal and philosophical theories about how international law can be an instrument of morality in war, albeit an imperfect one. She also studies how normative considerations shape public opinion on the use of force and the attitudes of conflict-affected populations. In 2021, she won a Philip Leverhulme Prize for work on the moral psychology of war. She currently co-convenes (with Scott Sagan) a research project on the "Law and Ethics of Nuclear Deterrence," which is part of the Research Network on Rethinking Nuclear Deterrence, funded by the MacArthur Foundation.

He is a Fellow of the Academy of Social Sciences in Australia and former editor of the Australian Journal of Political Science. Working in both political theory and empirical social science, he is best known for his contributions in the areas of democratic theory and practice and environmental politics. One of the instigators of the ‘deliberative turn’ in thinking about democracy, he has published eight books in this area with Oxford University Press, Cambridge University Press, and Polity Press. His work in environmental politics and climate governance has yielded seven books with Oxford University Press, Cambridge University Press, and Basil Blackwell. He has also worked on comparative studies of democratization, post-positivist public policy analysis, and the history and philosophy of social science. His current research emphasizes global justice, governance in the Anthropocene, and confronting contemporary challenges to democracy.

She is a board member of the Japan Deep Learning Association (JDLA). She is also a member of the Council for Social Principles of Human-centric AI, The Cabinet Office, which released “Social Principles of Human-Centric AI” in 2019. Internationally, she is an expert member of the working group on the Future of Work, GPAI (Global Partnership on AI). She obtained a Ph.D. from the University of Tokyo and previously held a position as Assistant Professor at the Hakubi Center for Advanced Research, Kyoto University. She was named the University of Tokyo Excellent Young Researcher, 2021.

Institute (Tokyo) and the Institute for Economics and Peace (Sydney), Vice-chair of the International Committee for Robot Arms Control, and member of the Institute of Electrical and Electronics Engineers Global Initiative on Ethics of Autonomous and Intelligent Systems. She was the Nobel Peace Institute Fellow in Oslo in 2017. A multiple teaching award-winner, her recent publications appeared in Nature, Foreign Affairs, International Relations, and other top journals. Her upcoming book is Common Good Governance in the Age of Military Artificial Intelligence with Oxford University Press 2023.

She is also an Adjunct Professor at the Fletcher School of Law and Diplomacy, where she teaches a graduate seminar on the role of nuclear weapons in the 21st century and a core course on Technology, Public Policy, and National Security. She is the lead faculty member of the Fletcher School Executive Education course on “Negotiating Technology Agreements in Emerging Markets: Developing Strategic capacities for accessing transformative technologies”. Dr Giovannini served as a Senior Strategy and Policy Officer to the Executive Secretary of the Comprehensive Nuclear Test Ban Treaty Organization (CTBTO). Before her international appointment, she served five years at the American Academy of Arts and Sciences in Boston as the Director of the Research Program on Global Security and International Affairs. With a Doctorate from the University of Oxford, Dr Giovannini began her career working for international organisations. She has published widely in Nature, the Bulletin of the Atomic Scientists, Arms Control Today, the National Interest, and The Washington Post, among others.

His published work examines the development of the just war tradition over time and the role it plays in circumscribing contemporary debates about the rights and wrongs of warfare. These themes are reflected in his two monographs: Victory: The Triumph and Tragedy of Just War (Oxford: Oxford University Press, 2019) and The Renegotiation of the Just War Tradition (New York: Palgrave, 2008). He has also co-edited three volumes and his work has been published in leading journals in the field, including International Studies Quarterly, the European Journal of International Relations, the Journal of Strategic Studies, the Journal of Global Security Studies, Review of International Studies, Ethics & International Affairs, and Millennium. Cian is a co-editor of the Review of International Studies.

diagnosis, and search, their integration with optimisation, machine learning, and verification, as well as their applications to energy and transport. Her recent work, which has received multiple academic and industry awards, focuses on handling constraints in planning under uncertainty, on integrating deep learning with search to solve planning problems, and on coordinating distributed energy resources to benefit their owners, the distribution grid, and energy markets. Sylvie is a fellow of the Association for the Advancement of Artificial Intelligence (AAAI) and a co-Editor in Chief of the Artificial Intelligence journal. She is a former Councilor of AAAI, co-Chair and President of the International Conference on Automated Planning and Scheduling (ICAPS), and Director of the Canberra Laboratories of NICTA, home to 150 researchers and PhD students.

He has been a Resident Associate of the Carnegie Endowment for Peace and a Guest Scholar at the Brookings Institution, and he has also served as a consultant for the Institute of Defense Analyses, the Center for Naval Analyses, and the National Defense University. He presently serves on the editorial boards of Foreign Policy, Security Studies, International Theory, International Relations, and Journal of Cold War Studies, and he also serves as Co-Editor of the Cornell Studies in Security Affairs, published by Cornell University Press. Additionally, he was elected as a Fellow in the American Academy of Arts and Sciences in May 2005. His book The Israel Lobby and U.S. Foreign Policy (Farrar, Straus & Giroux, 2007, co-authored with John J. Mearsheimer) was a New York Times best seller and has been translated into more than twenty foreign languages. His most recent book is The Hell of Good Intentions: America’s Foreign Policy Elite and the Decline of U.S. Primacy (Farrar, Straus & Giroux, 2018).

Coral Bell School of Asia Pacific Affairs

Hedley Bull Building

130 Garran Road

The Australian National University, Acton ACT 2600 Australia